Building Trust: Humans & AI

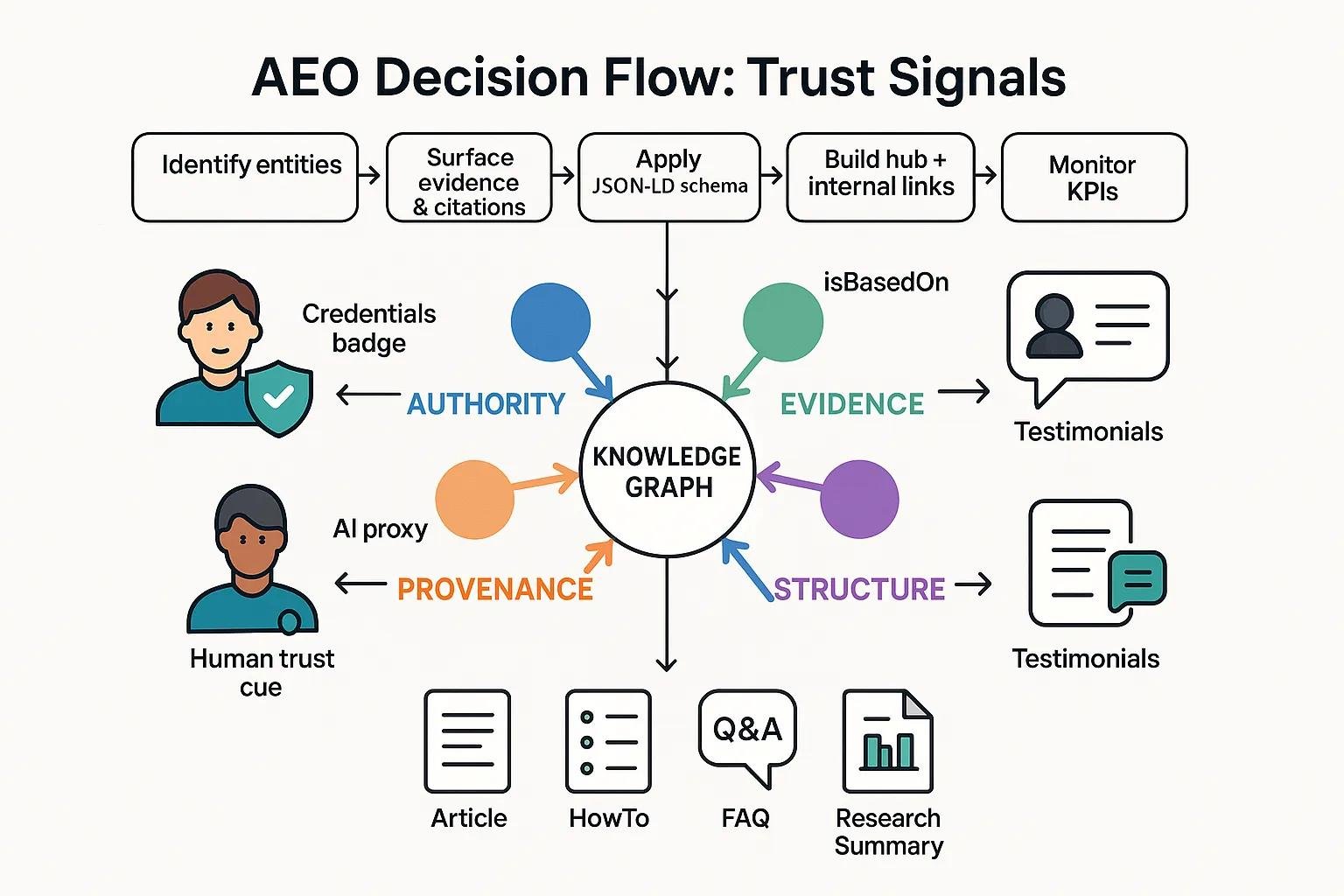

Answer Engine Optimization (AEO) is the practice of structuring content so that AI systems and large language models (LLMs) recognize, trust, and cite it as authoritative. The goal is greater visibility in AI-generated answers. This article explains how human trust psychology maps to algorithmic trust signals, which specific signals appear to matter to models, and which semantic and technical tactics can produce measurable citation gains. Many teams struggle because conventional SEO favors keywords and links, while AEO demands entity clarity, provenance, and answer quality. This guide addresses that gap with step-by-step strategies, schema examples, and KPI templates. Readers will gain two things. The first is the conceptual mapping: how authority, social proof, and transparency translate into machine-evaluable features. The second is a practical toolkit of semantic SEO patterns, structured-data snippets, audit tables, and monitoring cadences.

We will cover:

- what AEO is and its core trust signals

- psychological underpinnings and their algorithmic proxies

- concrete AEO tactics including semantic writing and schema

- ethics and human oversight

- measurement and audit practices

- case comparisons and model-differentiation

- future trends in human-AI collaboration for AEO

What Is Answer Engine Optimization and Why Does AI Trust Matter?

Answer Engine Optimization (AEO) is the deliberate design of content so that AI systems return it as a trusted answer. It works by emphasizing entities, provenance, and concise answers. The underlying mechanism is straightforward: models tend to favor content with identifiable entities, explicit evidence, and clear structure, which increases the chance of being cited in AI-generated answers. The payoff is higher referral volume and more authoritative placement in LLM-driven responses, extending discoverability beyond traditional search engine results pages (SERPs). Understanding AEO starts with contrasting it with traditional SEO signals and listing the core trust attributes that models evaluate, which we explore next.

How Does AEO Differ from Traditional SEO?

AEO differs from traditional SEO by privileging entity clarity and answer quality over keyword density and link count, and by optimizing for AI citation behavior rather than purely organic ranking. Traditional SEO optimizes for queries, ranking positions, and backlinks, while AEO optimizes for extractable answers, provenance, and structured evidence that LLMs can parse and cite. This shift changes content production: authors must declare entities, embed verifiable references, and format concise answers for generative consumption. The implication is that teams should prioritize semantic clusters and canonical entity pages to serve both human readers and AI agents.

What Are the Core Trust Signals AI Looks for in Content?

AI systems appear to weigh a short set of trust signals that act as proxies for human credibility:

- identifiable authority (recognized entity names)
- evidence and provenance (citations, primary data)
- structure and clarity (schema and headings)
- freshness or stability (update metadata and versioning)

Models infer competence from entity recognition, honesty from citation frequency and provenance markers, and reliability from consistent updates and canonicalization. These signals combine into algorithmic heuristics that increase the probability an LLM will select and cite a piece of content as an answer source. Understanding these signals enables targeted AEO tactics, which we outline below.

Why Is Human Psychology Key to Training AI Algorithms?

Human psychology matters because many algorithmic trust heuristics are derived from patterns in human-authored corpora where credibility cues appear consistently, and models learn to weight those cues. Psychological heuristics—authority, social proof, cognitive fluency—appear in text as author bylines, citations, readable structure, and testimonial evidence; models use those patterns as proxies for trust. The value is practical: by deliberately encoding psychological trust cues into content, publishers can influence how models perceive and prioritize their material. Mapping these human heuristics to explicit signals is the next essential step.

Autonomous Schema Markups for SEO with AI and Semantic Technology

With advances in artificial intelligence and semantic technology, search engines are integrating semantics to better answer complex search queries. This requires identifying well-known concepts or entities, and the relationships between them, in web page content. However, the growth of complex unstructured data on web pages has made concept identification considerably harder. Existing research focuses on entity recognition from linguistic structures such as complete sentences and paragraphs. Yet much of the data on web pages exists as unstructured text fragments enclosed in HTML tags. Ontologies provide schemas to structure data on the web. However, embedding them in web pages requires additional resources and expertise from organizations or webmasters, and this has become a major hindrance to large-scale adoption. We propose an approach for autonomous identification of entities from short text present in web pages.

How Do Humans Build Trust and How Does AI Mimic These Signals?

Trust between humans forms along the dimensions of competence, honesty, consistency, and benevolence, and each dimension has direct analogues in digital content that AI models can detect. Humans infer competence from credentials and track record; AI infers competence from entity authority and topical coverage. The practical result is that content teams should model human trust cues as structured metadata and evidence that LLMs can parse for reliable citation. The next paragraphs break down the psychological principles and show the algorithmic translation.

What Psychological Principles Influence Human Trust?

Human trust relies on several well-documented principles: authority (expert recognition), social proof (peer endorsement), consistency (stable messaging), and cognitive fluency (ease of processing). In content terms, authority shows up as author credentials and institutional affiliations, social proof as reviews or citations, consistency as aligned messaging across pages, and cognitive fluency as clear headings and simple sentences. These principles guide writing choices: emphasize clear credentials, surface corroborating sources, maintain consistent entity names, and structure content for readable parsing. Recognizing these principles primes the content to be both human-friendly and machine-interpretable.

How Do AI Algorithms Translate Human Trust Signals?

AI algorithms translate human trust cues into measurable proxies such as entity recognition scores, citation density, and structured provenance fields; these proxies inform model ranking and citation probabilities. For example, consistent mentions of an entity increase entity linking confidence, while structured metadata like author and datePublished boost provenance detection. Models trained on large corpora learn that documents with high citation density and standardized schema often correspond to reliable sources, so they weight those features when generating answers. Translating trust signals into technical artifacts is therefore critical for AEO implementation.

What Role Does Transparency Play in AI Content Trust?

Transparency functions as both a human and machine credibility enhancer: visible author attribution, version histories, and explicit source links increase the likelihood that humans and models treat content as trustworthy. For AI, transparency can be encoded via schema properties (author, isBasedOn, mentions) and visible provenance statements that models can parse as evidence. Practically, publishers should present clear origin statements and structured provenance to make origin and evidence explicit; doing so both reassures readers and supplies models with the signals they use to prefer and cite content. This focus on transparency leads directly into actionable content strategies.
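Here is a minimal sketch of encoding provenance. The Python below builds a schema.org Article JSON-LD object that carries author, datePublished, dateModified, isBasedOn, and mentions. The function name, author name, and source URL are hypothetical placeholders. A real page would embed the resulting JSON in a script tag of type application/ld+json.

```python
import json


def build_article_jsonld(headline, author_name, date_published, date_modified,
                         sources, mentioned_entities):
    """Return a schema.org Article as a JSON-LD string with explicit provenance.

    `sources` become isBasedOn URLs; `mentioned_entities` become Thing mentions.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "dateModified": date_modified,
        # isBasedOn accepts URLs or CreativeWorks; plain URLs keep the sketch small.
        "isBasedOn": list(sources),
        "mentions": [{"@type": "Thing", "name": name} for name in mentioned_entities],
    }
    return json.dumps(data, indent=2)


print(build_article_jsonld(
    headline="Building Trust: Humans & AI",
    author_name="Jane Example",                         # hypothetical author
    date_published="2025-01-15",
    date_modified="2025-03-01",
    sources=["https://example.com/primary-dataset"],    # hypothetical source
    mentioned_entities=["Answer Engine Optimization", "Schema.org"],
))
```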

What Are the Best AEO Content Strategies to Optimize for AI Trust Signals?

AEO content strategies combine semantic SEO, authority-anchoring linking patterns, and structured data to make trust signals explicit and machine-readable. The mechanism is to convert psychological trust cues into concrete artifacts—entity-first headings, canonical authority pages, and JSON-LD schema with provenance fields—so LLMs can reliably parse and cite content. The value is measurable: higher AI citation rate, more featured answers, and increased traffic from generative platforms. Below are tactical approaches and an EAV-style comparison of content types and required signals.

Semantic and structural AEO tactics include entity-first writing, topic cluster pages, and consistent vocabulary that disambiguates entities for models. These practices make content more semantically explicit and easier for LLMs to identify as authoritative. The next subsection covers semantic SEO patterns for content authors.

How Can Semantic SEO Improve AI’s Understanding of Content?

Semantic SEO improves AI comprehension by prioritizing entities, relationships, and disambiguation over isolated keyword usage. This increases the likelihood that models link content to knowledge graph nodes. Authors should open with entity declarations and use canonical entity pages. They should also map synonyms and variant spellings consistently to one canonical name to reduce ambiguity. Sentences that follow an entity → relationship → entity pattern strengthen machine-readable triples. For example, "Schema.org defines the FAQPage type" links two entities through one explicit relationship. These patterns enable knowledge graph alignment and improve AI content trust.
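To illustrate consistent entity naming, here is a small Python sketch that maps known variant spellings to one canonical entity and counts mentions per entity. The alias map and function name are hypothetical. Simple regular-expression matching stands in for real entity linking, and the sketch assumes that no alias overlaps another.

```python
import re
from collections import Counter

# Hypothetical alias map: every known variant points to one canonical entity name.
ALIASES = {
    "answer engine optimization": "Answer Engine Optimization",
    "answer-engine optimization": "Answer Engine Optimization",
    "aeo": "Answer Engine Optimization",
    "large language model": "Large Language Model",
    "llm": "Large Language Model",
}


def canonical_mentions(text, aliases=ALIASES):
    """Count mentions per canonical entity and record which variants appeared."""
    counts = Counter()
    variants = {}
    for alias, canonical in aliases.items():
        # Word boundaries avoid matches inside longer words; "s?" allows simple plurals.
        pattern = r"\b" + re.escape(alias) + r"s?\b"
        n = len(re.findall(pattern, text, flags=re.IGNORECASE))
        if n:
            counts[canonical] += n
            variants.setdefault(canonical, set()).add(alias)
    return counts, variants


text = "Answer Engine Optimization (AEO) helps LLMs cite pages. AEO differs from SEO."
counts, variants = canonical_mentions(text)
print(counts["Answer Engine Optimization"])             # 3
print(sorted(variants["Answer Engine Optimization"]))   # ['aeo', 'answer engine optimization']
```

A page that uses several variants of the same entity is a candidate for standardizing on the canonical name.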

Semantic SEO, when combined with topic modeling and internal linking, prepares content for both human readers and LLMs, which leads into authority-building linking strategies.

How Do Authority Anchors and Internal Linking Build AI Credibility?

Authority anchors and hub-and-spoke internal linking concentrate topical authority and signal persistent expertise to models through repeated, context-rich anchors and stable authority pages. Use anchor text that names entities explicitly and link from hub pages to detailed entity pages to reinforce entity authority; for example, an author page or research hub acts as a persistent authority anchor. Internal links increase entity co-occurrence, which raises model confidence in entity-to-document associations. Implementing these patterns structures a site as a knowledge graph that models can traverse and trust.
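A hub-and-spoke structure can be checked mechanically. The sketch below assumes a hypothetical internal link graph, represented as a Python dict from each page path to the set of paths it links to. It lists the spoke pages the hub fails to link to and the spoke pages that do not link back to the hub.

```python
# Hypothetical internal link graph: page path -> set of pages it links to.
LINKS = {
    "/aeo-hub": {"/entity/schema-org", "/entity/llm-citations"},
    "/entity/schema-org": {"/aeo-hub"},
    "/entity/llm-citations": {"/aeo-hub", "/entity/schema-org"},
    "/entity/provenance": set(),  # a spoke that is neither linked nor linking back
}


def hub_gaps(links, hub, spokes):
    """Return (spokes the hub does not link to, spokes that do not link back to the hub)."""
    hub_targets = links.get(hub, set())
    not_linked_from_hub = sorted(s for s in spokes if s not in hub_targets)
    not_linking_to_hub = sorted(s for s in spokes if hub not in links.get(s, set()))
    return not_linked_from_hub, not_linking_to_hub


spokes = ["/entity/schema-org", "/entity/llm-citations", "/entity/provenance"]
missing_from_hub, missing_backlink = hub_gaps(LINKS, "/aeo-hub", spokes)
print(missing_from_hub)   # ['/entity/provenance']
print(missing_backlink)   # ['/entity/provenance']
```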

Different content types require different trust attributes; the table below compares common types and the signals to include for AEO.

| Content Type | Trust Attribute | Recommended Signal |
|---|---|---|
| Article / Analysis | Evidence density | Inline citations, data tables, references |
| HowTo / Tutorial | Procedural clarity | Step markup, totalTime, tool |
| FAQ / Short Answer | Directness | Concise Q/A, clear entity mentions |
| Research Summary | Provenance | Source mentions, isBasedOn, dataset links |
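The table's recommendations can be expressed as a per-type property checklist. This Python sketch is illustrative only, and the content-type labels are hypothetical. The schema.org properties chosen for each row are one reasonable reading of the table, not an official requirement list:

- citation for articles
- step, totalTime, and tool for HowTo
- mainEntity for FAQPage
- isBasedOn and mentions for research summaries

```python
# Hypothetical content-type labels mapped to (expected schema.org @type, properties to expect).
RECOMMENDED = {
    "article": ("Article", ("author", "datePublished", "citation")),
    "howto": ("HowTo", ("step", "totalTime", "tool")),
    "faq": ("FAQPage", ("mainEntity",)),
    "research_summary": ("ScholarlyArticle", ("isBasedOn", "mentions")),
}


def missing_properties(content_type, jsonld):
    """List gaps between a page's JSON-LD dict and the recommendation for its content type."""
    expected_type, properties = RECOMMENDED[content_type]
    problems = []
    if jsonld.get("@type") != expected_type:
        problems.append(f"@type should be {expected_type}")
    problems.extend(p for p in properties if p not in jsonld)
    return problems


howto_page = {"@context": "https://schema.org", "@type": "HowTo", "name": "Add FAQ schema", "step": []}
print(missing_properties("howto", howto_page))  # ['totalTime', 'tool']
```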

How Is Structured Data Implemented to Enhance AI Content Evaluation?

Structured data is implemented by adding JSON-LD schema that encodes author, datePublished, mentions, and isBasedOn, which makes provenance explicit and machine-parseable. Where a specific claim is being fact-checked, add a ClaimReview item as well. Practical steps:

1. Declare the primary entity in schema.
2. Include authoritative identifiers where possible, such as sameAs links to a recognized reference entry for the organization rather than a name alone.
3. Use properties like mentions and isBasedOn to link to sources.
4. Test the markup with structured data validators and iterate.

Encoding these properties is intended to improve model confidence and increase the chance of being cited in AI-generated answers.
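A local pre-check can catch missing provenance fields before you run an external validator. This minimal sketch uses only Python's standard library (html.parser and json). It assumes each JSON-LD script holds a single object rather than an @graph array. The list of provenance fields is an assumption drawn from the properties named above.

```python
import json
from html.parser import HTMLParser

# Assumed provenance fields to require; adjust per content type.
PROVENANCE_FIELDS = ("author", "datePublished", "mentions", "isBasedOn")


class JsonLdExtractor(HTMLParser):
    """Collects parsed objects from <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._capturing = False
        self._chunks = []
        self.items = []
        self.errors = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capturing = True
            self._chunks = []

    def handle_data(self, data):
        if self._capturing:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._capturing:
            self._capturing = False
            try:
                self.items.append(json.loads("".join(self._chunks)))
            except json.JSONDecodeError as exc:
                self.errors.append(str(exc))


def provenance_report(html):
    """For each JSON-LD object, list which assumed provenance fields are missing."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    report = [
        {"type": item.get("@type"), "missing": [f for f in PROVENANCE_FIELDS if f not in item]}
        for item in parser.items
    ]
    return report, parser.errors


page = """<html><head><script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Article",
 "author": {"@type": "Person", "name": "Jane Example"}, "datePublished": "2025-01-15"}
</script></head><body>...</body></html>"""
report, errors = provenance_report(page)
print(report)  # [{'type': 'Article', 'missing': ['mentions', 'isBasedOn']}]
```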

What Schema Types Best Support AEO Content?

Certain schema types reliably carry trust-relevant properties, each supporting provenance and author fields: Article, WebPage, BlogPosting, FAQPage, HowTo, and ClaimReview (for contested claims). Prioritize Article/WebPage for analysis pieces, FAQPage for concise Q/A, HowTo for procedural content, and ClaimReview for fact-checked assertions. Include the properties author, datePublished, dateModified, mentions, and isBasedOn. Add review data when applicable, such as a ClaimReview with claimReviewed and reviewRating. Implement minimal, validated JSON-LD snippets and update them, including dateModified, with each content revision to maintain freshness and provenance.
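As one concrete instance, the sketch below builds a minimal FAQPage object in Python. It uses the schema.org Question and Answer types with the mainEntity, acceptedAnswer, and text properties. The question and answer strings are placeholders, and the output should still be validated before publishing.

```python
import json


def build_faq_jsonld(pairs):
    """Build a schema.org FAQPage dict from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = build_faq_jsonld([
    ("What is Answer Engine Optimization?",
     "AEO structures content so AI systems can recognize, trust, and cite it."),
])
print(json.dumps(faq, indent=2))
```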

How Can Human Oversight and Ethics Improve AI Content Trustworthiness?

Human oversight and ethical governance ensure that AI-assisted content maintains factual accuracy, reduces bias, and preserves authorial accountability—factors that increase both human and AI trust. Mechanisms include editorial quality gates, provenance checks, and explicit disclosure practices that map to model-evaluable features. The practical benefit is durable trust: content that withstands scrutiny receives repeated citations and sustained visibility. The following subsections outline editorial roles, transparency practices, and the strategic value of ethics.

What Is the Role of Human Editors in AI Content Creation?

Human editors act as verification gatekeepers who check facts, vet citations, preserve narrative coherence, and enforce provenance requirements, which prevents the propagation of errors that models might otherwise amplify. Editorial workflows should include source validation, consistency checks across entity mentions, and readability edits to maximize cognitive fluency. Editors also flag ambiguous entity references and ensure schema fields are populated correctly. By integrating human review into AI-assisted drafts, organizations preserve authenticity and improve the signals models use to evaluate trust.
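An editorial quality gate can be partly automated. In this hypothetical Python sketch, a draft is blocked when any claim lacks an http(s) source URL or when a required schema field is empty. The Claim type and editorial_gate function are illustrative names. A human editor still has to judge whether each source actually supports its claim.

```python
from dataclasses import dataclass, field
from typing import List
from urllib.parse import urlparse


@dataclass
class Claim:
    text: str
    sources: List[str] = field(default_factory=list)


def has_valid_source(claim):
    """True if at least one source is an absolute http(s) URL."""
    for source in claim.sources:
        parsed = urlparse(source)
        if parsed.scheme in ("http", "https") and parsed.netloc:
            return True
    return False


def editorial_gate(claims, jsonld, required_fields=("author", "datePublished")):
    """Return blocking issues; an empty list means the draft can move to human sign-off."""
    issues = [f"Unsourced claim: {c.text}" for c in claims if not has_valid_source(c)]
    issues += [f"Empty schema field: {name}" for name in required_fields if not jsonld.get(name)]
    return issues


draft_claims = [
    Claim("AEO targets citation in AI answers.", ["https://example.com/source"]),
    Claim("Models prefer pages with schema."),  # no source yet
]
for issue in editorial_gate(draft_claims, {"author": "Jane Example"}):
    print(issue)
```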

How Can Transparency and Authenticity Mitigate AI Bias?

Transparency and authenticity mitigate bias by surfacing data provenance, authorship, and methodology, so that both readers and models can assess the origin and reliability of claims. Practical actions include visible disclosure statements, explicit mention of data sources, and schema-encoded provenance properties like isBasedOn and mentions. Primary-source quotes, interviews, and raw data strengthen authenticity signals that models are likely to treat as strong evidence. These disclosure practices reduce the risk of misattribution and supply LLMs with the markers they use to prefer one source over another.

Why Is Ethical AI Content Generation Critical for Long-Term Trust?

Ethical AI content generation is a strategic advantage because audiences and models both reward transparency, accuracy, and stewardship, which improves long-term citation likelihood and brand reputation. Public and industry discussion through 2025 has increasingly emphasized that disclosed provenance and editorial oversight support public trust and reduce regulatory risk. Implementing governance frameworks (editorial policies, citation standards, and audit trails) creates repeatable processes that elevate content quality and model trust. Ethics therefore underpins sustainable AEO performance rather than serving as an optional compliance exercise.

How Do You Measure and Monitor AI Trust in Your Content?

Measuring AI trust requires a set of KPIs that capture how often models cite your content, how frequently you appear in AI-driven answers, and the engagement quality of AI-referred visits. The mechanism is to combine automated tracking (search console/analytics) with manual LLM checks and comparison against target thresholds. The benefit is an actionable monitoring cadence that informs updates and investment. Below we provide KPI definitions in an EAV-style table and practical tool recommendations.

Key KPIs for AI trust focus on citation frequency, snippet appearances, and AI-sourced engagement, which we quantify in the table below.

| Metric | Description | Target / Example |
|---|---|---|
| AI Citation Rate | Share of LLM answers that cite your content | 3–5%+ for high-authority pages |
| Featured Snippet / People Also Ask (PAA) Appearances | Times content appears in answer boxes | Increase by 10–20% after AEO changes |
| AI Referral Dwell Time | Engagement time from AI-sourced visits | 60–90 seconds target |
| Schema Validation Rate | Percentage of pages with valid AEO schema | 95%+ for priority pages |

What Key Performance Indicators Track AI Content Trust?

Key performance indicators include AI citation rate, featured snippet impressions, AI-referral engagement, and schema validation rate, each measured with a combination of automated and manual methods. AI citation rate is measured via manual LLM prompts and automated mention tracking if supported by specialized tools; featured snippet impressions use search console-like data where available; dwell time and bounce rate come from site analytics filtered by referral source tagging. Track these KPIs regularly and set thresholds for action—for example, re-audit if AI citation rate falls below target. Clear KPI definitions enable repeatable monitoring and optimization.
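The arithmetic behind two of these KPIs is simple enough to script. This sketch assumes a hypothetical log of manual LLM checks, each recording which domains an answer cited. It uses the lower bounds from the KPI table (a 3% AI citation rate and a 95% schema validation rate) to flag metrics that should trigger a re-audit.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LlmCheck:
    model: str  # hypothetical model label
    prompt: str
    cited_domains: List[str] = field(default_factory=list)


# Lower bounds from the KPI table: 3% AI citation rate, 95% schema validation rate.
TARGETS = {"ai_citation_rate": 0.03, "schema_validation_rate": 0.95}


def ai_citation_rate(checks, domain):
    """Share of sampled LLM answers that cite `domain`."""
    if not checks:
        return 0.0
    return sum(domain in c.cited_domains for c in checks) / len(checks)


def schema_validation_rate(valid_pages, total_pages):
    """Share of priority pages whose schema passed validation."""
    return valid_pages / total_pages if total_pages else 0.0


def metrics_below_target(metrics, targets=TARGETS):
    """Names of KPIs under their lower bound, i.e. candidates for a re-audit."""
    return sorted(name for name, value in metrics.items() if value < targets[name])


# 40 sampled answers, one of which cites example.com (hypothetical data).
checks = [
    LlmCheck("model-a", f"prompt {i}", ["example.com"] if i == 0 else ["other.org"])
    for i in range(40)
]
metrics = {
    "ai_citation_rate": ai_citation_rate(checks, "example.com"),
    "schema_validation_rate": schema_validation_rate(96, 100),
}
print(metrics_below_target(metrics))  # ['ai_citation_rate']
```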

Which Tools Help Monitor AI Content Trust and Visibility?

Monitoring tools include platform analytics for traffic and dwell time, search console equivalents for snippet impressions, topical authority platforms for entity tracking, and scheduled manual LLM checks to validate citation behavior across models. Each tool plays a role: analytics quantify engagement, authority tools map entity coverage, and manual LLM prompts reveal real-world citation behavior. Combine automated alerts for schema failures with quarterly manual sampling across multiple LLMs to detect model-specific differences. This blended workflow ensures both scale and fidelity in monitoring AI trust.

How Often Should You Audit and Update Content for AEO?

Audit cadence should align with content priority: high-value pages monthly, competitive topical hubs quarterly, and low-priority content semi-annually; audits should verify schema, freshness, evidence completeness, and internal linking. The checklist for each audit includes schema validation, citation verification, entity canonicalization, and LLM citation checks across a small model set. Re-run manual LLM prompts after updates to measure citation deltas and iterate. Establishing a cadence ensures persistent signal quality and responds to model or knowledge graph updates.
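The cadence can be encoded as a small scheduler. This Python sketch approximates monthly, quarterly, and semi-annual as 30, 91, and 182 days, which is an assumption. The priority labels and page paths are hypothetical.

```python
from datetime import date, timedelta

# Approximate cadences (assumption): monthly, quarterly, semi-annual.
CADENCE_DAYS = {"high_value": 30, "topical_hub": 91, "low_priority": 182}

# Checklist items named in the text; each audit should cover all of them.
AUDIT_CHECKLIST = (
    "schema validation",
    "citation verification",
    "entity canonicalization",
    "LLM citation check",
)


def next_audit(last_audit, priority):
    """Date the next audit is due for a page of the given priority."""
    return last_audit + timedelta(days=CADENCE_DAYS[priority])


def overdue_pages(pages, today):
    """pages: iterable of (path, priority, last_audit). Returns paths due on or before today."""
    return [path for path, priority, last in pages if next_audit(last, priority) <= today]


pages = [  # hypothetical inventory
    ("/aeo-hub", "topical_hub", date(2024, 12, 20)),
    ("/pricing-faq", "high_value", date(2025, 3, 20)),
    ("/archive/2023-notes", "low_priority", date(2024, 12, 1)),
]
for path in overdue_pages(pages, today=date(2025, 4, 1)):
    print(path, "->", ", ".join(AUDIT_CHECKLIST))
```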

What Are Real-World Examples of Successfully Training AI to Trust Content?

Real-world AEO interventions typically combine structured data, provenance reinforcement, and internal authority anchors, producing measurable increases in AI citation and snippet presence within weeks to months. The mechanism is reproducible: identify priority entity pages, add explicit provenance, encode mentions in schema, and concentrate links from hub pages. Outcomes often include higher AI citation rates and improved featured-answer presence, which we summarize in the case comparison table below.

| Case (Type) | Intervention | Outcome (Metric Change) |
|---|---|---|
| Publisher analysis page | Added Article schema + mentions + internal authority links | AI citation rate +4 percentage points in 8 weeks |
| Product tutorial hub | Implemented HowTo schema and hub linking | Featured snippet appearances +15% in 12 weeks |
| Research summary | Added provenance, ClaimReview, and dataset links | Snippet trust citations doubled in 6 weeks |

How Have Brands Improved AI Citation Rates Through AEO?

Brands have improved AI citation rates by systematically implementing schema, surfacing primary sources, and centralizing authority in canonical entity pages; the result is that models pick these pages as compact, provable answers more often. Interventions emphasize evidence-dense summaries, explicit provenance fields, and persistent hub pages, leading to citation gains measurable through manual LLM checks and analytics. The timeline varies, but many interventions show material lift within 6–12 weeks, supporting the case for prioritized AEO investment.

What Lessons Can Be Learned from AI Trust Success Stories?

Recurring lessons are consistent: prioritize provenance and schema, invest in editorial verification, and measure against clear KPIs; avoid sparse updates and ambiguous entity naming which reduce model confidence. Success stories emphasize iteration: implement changes, measure citation deltas, then refine schema and link architecture. Recommended next steps are to create authority anchors, standardize schema across content types, and schedule recurring audits. These lessons guide a practical roadmap for teams seeking reproducible AEO gains.

How Do Different AI Models Interpret Trust Signals Differently?

Different LLMs demonstrate varying citation behaviors: some prioritize explicit provenance and structured cues, others rely more on corpus prominence and co-occurrence patterns; therefore cross-model testing is essential. A recommended workflow runs the same prompts across multiple models, records citation frequency and source attribution quality, and adjusts content accordingly—adding schema for provenance-sensitive models and increasing topical depth for models sensitive to corpus prominence. Adapting content to model tendencies improves overall coverage in AI-driven answers.
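The cross-model workflow amounts to grouping the results of one shared prompt set by model. The sketch below assumes hypothetical model labels and a result format of (model, prompt, cited domains). The model with the lowest rate is the first candidate for content adjustments.

```python
from collections import defaultdict


def citation_rate_by_model(results, domain):
    """results: iterable of (model, prompt, cited_domains). Returns {model: citation rate}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for model, _prompt, cited_domains in results:
        totals[model] += 1
        if domain in cited_domains:
            hits[model] += 1
    return {model: hits[model] / totals[model] for model in totals}


# Same prompts run against two hypothetical models.
results = [
    ("model-a", "what is answer engine optimization", {"example.com", "reference.org"}),
    ("model-a", "aeo vs seo", {"example.com"}),
    ("model-b", "what is answer engine optimization", {"reference.org"}),
    ("model-b", "aeo vs seo", {"example.com"}),
]
rates = citation_rate_by_model(results, "example.com")
weakest = min(rates, key=rates.get)
print(rates, weakest)  # {'model-a': 1.0, 'model-b': 0.5} model-b
```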

How Is the Future of AEO Shaped by Advances in AI and Human Collaboration?

The future of AEO will be shaped by improved model explainability, richer knowledge graphs, dynamic schema adoption, and tighter human-AI editorial loops that together raise the baseline for machine trust. As models become better at parsing provenance and as regulation emphasizes source disclosure, publishers who codify trust practices will gain persistent advantage. Preparing for these shifts means evolving semantic SEO, investing in governance, and integrating continuous testing into editorial workflows, which we explore in the final subsections.

What Emerging Trends Will Influence AI Content Trust?

Emerging trends include stronger model transparency, expanded knowledge graph integration, and regulatory pressure for provenance and disclosure, all of which will raise the bar for AEO compliance and performance. Observed platform behavior through 2025 suggests a growing emphasis on verifiable sources and clearer attribution, which favors content with explicit schema and primary-source links. These shifts mean teams must plan for more rigorous provenance encoding and faster audit cadences. Anticipating these changes prepares content for future model behaviors.

How Will Semantic SEO Evolve with AI Overviews and LLMs?

Semantic SEO will evolve toward dynamic, graph-driven content. In this model, entity pages act as living nodes whose schema and provenance are updated as the underlying facts change, enabling LLMs to consume authoritative, up-to-date answers. Practical steps include:

- regenerating schema (including dateModified) whenever page content changes
- integrating content with internal knowledge graphs
- producing concise, evidence-backed summaries optimized for direct answer extraction

This evolution requires technical investment but should yield stronger, more consistent AI citations and improved answer placement.

Why Is Continuous Human-AI Collaboration Essential for AEO Success?

Continuous human-AI collaboration ensures that automated processes supply scalable outputs while humans validate evidence, context, and ethical considerations—maintaining the trust signals models rely on. A practical governance workflow combines automated schema checks and topical authority scoring with human editorial review and periodic cross-model validation. This loop preserves content quality, adapts to model updates, and sustains AI trust over time, which is essential for durable AEO success.